
Critical zero-day flaw in MS SQL Server 🔓 | MariaDB acquires GridGain ⚡ | Build Your Own Data Lakehouse 🤖

Deep Dive: A Look Into Microsoft Fabric, Part 4: Interoperability

What’s in today’s newsletter:

Critical Microsoft SQL Server zero-day threatens security 🔓

MariaDB acquires GridGain to speed AI inference ⚡

Build Your Own Data Lakehouse: DIY Cost Guide 🤖

Validio Raises $30M to Boost Enterprise Data Management 💵

Also, check out the weekly Deep Dive - MS Fabric Interoperability


SQL SERVER

TL;DR: A critical zero-day vulnerability in Microsoft SQL Server enables remote code execution, risking data breaches and network compromise; Microsoft plans a patch, while organizations must apply workarounds and enhance security monitoring.

A critical zero-day vulnerability in Microsoft SQL Server allows remote execution of arbitrary code.

Attackers can exploit it with specially crafted requests to access sensitive data or compromise entire networks.

Microsoft is expected to release an urgent patch, but interim workarounds and monitoring are essential.

The vulnerability presents a global threat, emphasizing the need for strong vulnerability management and incident response.

Why this matters: This zero-day in Microsoft SQL Server threatens critical data integrity and business operations worldwide, showing how quickly vulnerabilities in foundational software can be weaponized. Because a patch is expected but not yet released, applying interim workarounds now, patching as soon as the fix ships, and stepping up security monitoring are crucial to prevent widespread breaches and disruptions in enterprise environments that depend on this database platform.

RELATIONAL DATABASE

Source: StorageNewsletter

TL;DR: MariaDB acquired GridGain to reduce AI inference latency by integrating in-memory computing, enabling faster query responses and real-time decision-making, strengthening its competitive edge in AI-driven database solutions.

MariaDB acquired GridGain to tackle latency issues in AI inference workloads with faster data processing.

GridGain’s Apache Ignite-based in-memory platform boosts data access speeds by caching and processing in memory.

Integration enables MariaDB to offer faster query responses with combined persistent storage and in-memory processing.

The acquisition strengthens MariaDB’s position in AI databases, enhancing real-time decision-making and scalability for enterprises.

Why this matters: By acquiring GridGain, MariaDB aims to reduce AI inference latency, which is crucial for real-time analytics and decision-making. The integration is intended to boost performance, make MariaDB more competitive, and help enterprises deploy scalable, efficient AI solutions that combine fast in-memory processing with reliable storage.

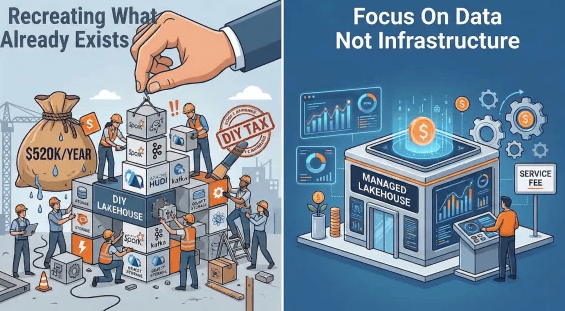

DATA ARCHITECTURE

Source: ILUM

TL;DR: Self-hosted data lakehouses, powered by open-source tools, offer scalable, cost-efficient, and secure alternatives to cloud solutions, enabling customizable data management but requiring skilled staff and operational investment.

Self-hosted data lakehouses combine data lake scalability with warehouse governance for unified data management platforms.

Rising cloud costs and data privacy concerns drive businesses toward DIY, on-premise data lakehouse solutions.

Open-source tools like Apache Iceberg, Hudi, and Delta Lake enable customizable, cost-efficient self-hosted data infrastructures.

The trend supports data control and innovation but demands skilled staff and operational setup efforts.

Why this matters: Self-hosted data lakehouses offer businesses a way to reduce cloud dependence, cut costs, and enhance data control amid privacy concerns. This shift empowers organizations, especially smaller or regulated ones, to tailor analytics infrastructure but also requires investment in expertise and management, signaling a move toward flexible, decentralized data systems.

DATA OBSERVABILITY

TL;DR: Validio raised $30 million to advance its enterprise data management platform, combining real-time processing and governance, aiming to expand and innovate with AI-driven automation amid rising market demand.

Validio raised $30 million to enhance its enterprise data management and integration platform.

The platform combines real-time data processing with governance for improved quality and accessibility.

Funding will support expansion, innovation, and automation with AI-driven features across industries.

Investment signals strong confidence in Validio’s vision amid growing demand for advanced data governance.

Why this matters: Validio's $30 million funding enables it to advance AI-driven, real-time data integration and governance, crucial for enterprises facing complex data challenges. This investment underscores rising demand for robust data management solutions, empowering businesses with accurate insights and operational efficiency that drive growth and competitive advantage.

EVERYTHING ELSE IN CLOUD DATABASES

Top 10 SQL Server Tools to Watch in 2026

MongoDB Boosts AI Features, Sparks Growth Hopes

Datastrike boosts Microsoft Fabric data services

Monte Carlo's agent boosts end-to-end observability

Oracle Q3 2026: Strong cloud growth reported

LeakyLooker finds 9 risks in Google Looker Studio

BigID, Atlan launch unified data catalog for AI

Data Architects Drive Smart Business Decisions

SQL Server 2025 Boosts Data Platform Power

Unity Catalog Unifies Data Discovery with Business Context

Snowflake Integrates Cortex AI with dbt, Airflow

Watsonx Data’s OpenRAG transforms unstructured AI data

Solidatus launches AI tool to automate data lineage

PointFive boosts cloud AI for Snowflake & BigQuery

Snowflake Adds Apache Iceberg v3 Support

BigQuery Pipeline Connection Setup Guide

Databricks Launches Genie Code, Acquires Quotient AI

DEEP DIVE

A detailed look into Microsoft Fabric, Part Four, Interoperability

In Microsoft Fabric, interoperability is not simply about offering a wide variety of connectors; it is the combination of a shared storage substrate, open data formats, multiple compute engines, and metadata-driven reuse that eliminates data silos and unnecessary duplication.

Here is a comprehensive overview of how Microsoft Fabric achieves interoperability across its ecosystem:

The Unified Storage Foundation: OneLake and Open Formats

The core of Fabric’s architecture is OneLake, a single, unified logical data lake that acts as the "OneDrive for data" for an entire organization.

Delta Lake Standard: All tabular data in Fabric is stored natively in the open-source Delta Lake (Parquet) format. This standardization allows disparate compute engines—such as Apache Spark, T-SQL (Data Warehouse and SQL analytics endpoints), and Kusto Query Language (KQL)—to simultaneously read and write to the same physical data footprint without proprietary translation layers.

Apache Iceberg Interoperability: Fabric extends its open-format commitment through bidirectional metadata virtualization, allowing Apache Iceberg tables to be interpreted as Delta tables and vice versa, enabling seamless cross-platform catalog integration.
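To make the "same physical data footprint" idea concrete, here is a minimal sketch of how a single lakehouse table resolves to one OneLake location that any Delta-capable engine can be pointed at. The workspace, lakehouse, and table names are hypothetical, and the sketch assumes the standard `abfss://` addressing for OneLake with no special characters in the names.

```python
from dataclasses import dataclass

# Global OneLake DFS endpoint used in ABFS-style URIs.
ONELAKE_HOST = "onelake.dfs.fabric.microsoft.com"


@dataclass(frozen=True)
class LakehouseTable:
    """Identifies one Delta table inside a Fabric lakehouse (names are hypothetical)."""
    workspace: str
    lakehouse: str
    table: str

    def abfss_uri(self) -> str:
        # One URI, one set of Delta/Parquet files; no per-engine copies.
        return (
            f"abfss://{self.workspace}@{ONELAKE_HOST}/"
            f"{self.lakehouse}.Lakehouse/Tables/{self.table}"
        )


orders = LakehouseTable(workspace="sales_ws", lakehouse="bronze", table="orders")
uri = orders.abfss_uri()
print(uri)
```

In a Spark notebook, a URI like this could be passed to `spark.read.format("delta").load(uri)`, while the SQL analytics endpoint exposes the same files as a table by name.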

Zero-Copy Data Access and Virtualization

Fabric minimizes data duplication through two primary virtualization and replication mechanisms:

Shortcuts: Shortcuts act as virtual pointers to data stored internally in Fabric or externally in clouds like Azure Data Lake Storage (ADLS) Gen2, Amazon S3, Google Cloud Storage (GCS), and Dataverse. When an analytical engine queries a shortcut, it performs an in-place I/O operation against the source, bypassing the need to copy data into OneLake.

Mirroring: For operational databases (such as Azure Cosmos DB, Azure SQL, Snowflake, and PostgreSQL), mirroring provides continuous, near real-time replication into OneLake. It converts transactional data into analytics-ready Delta tables and automatically generates a SQL analytics endpoint for immediate T-SQL querying, eliminating complex ETL pipelines.
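The shortcut behaviour described above, a virtual pointer that is read in place rather than copied, can be illustrated with a small toy model. This is not Fabric's implementation; the `Store` and `Lake` classes and all paths are hypothetical stand-ins used only to show the idea.

```python
from dataclasses import dataclass, field


@dataclass
class Store:
    """Stand-in for one storage system (OneLake, S3, ADLS Gen2, ...)."""
    name: str
    objects: dict[str, bytes] = field(default_factory=dict)
    reads: int = 0

    def read(self, path: str) -> bytes:
        self.reads += 1
        return self.objects[path]


@dataclass
class Lake:
    """Toy lake: local storage plus a table of shortcuts to other stores."""
    local: Store
    shortcuts: dict[str, tuple[Store, str]] = field(default_factory=dict)

    def add_shortcut(self, prefix: str, target: Store, target_prefix: str) -> None:
        # Only the pointer is recorded; no bytes are copied into the lake.
        self.shortcuts[prefix] = (target, target_prefix)

    def read(self, path: str) -> bytes:
        for prefix, (target, target_prefix) in self.shortcuts.items():
            if path.startswith(prefix):
                # Redirect the read to the source system, in place.
                return target.read(target_prefix + path[len(prefix):])
        return self.local.read(path)


s3 = Store("s3", {"raw/events/part-0.parquet": b"events"})
lake = Lake(local=Store("onelake"))
lake.add_shortcut("Tables/events/", s3, "raw/events/")

data = lake.read("Tables/events/part-0.parquet")
```

After the read, the local store is still empty and the only I/O was one read against the source, which is the property that distinguishes a shortcut from a copy or from mirroring's continuous replication.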

Cross-Engine and Business Intelligence (BI) Integration

Fabric decouples storage from compute, allowing specialized engines to interact with the same data.

Direct Lake Mode: This feature bridges data engineering and business intelligence by allowing the Power BI VertiPaq engine to read Delta Parquet files directly from OneLake. It delivers the high performance of cached "Import mode" without the latency and management overhead of actually copying or refreshing the data.

GraphQL API: For application developers, Fabric provides a unified API for GraphQL. This layer abstracts backend complexities, allowing applications to query data across lakehouses, warehouses, and SQL databases in a single API call without requiring custom backend code.
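As a rough illustration of the "single API call" point, the sketch below builds a standard GraphQL-over-HTTP request body that asks for two entities at once. The `orders` and `customers` types, their fields, and the `items` wrapper are hypothetical; the real schema depends on which tables are exposed through the API, and no request is actually sent here.

```python
import json

# Hypothetical query spanning two entities that could live in different
# Fabric items (for example a warehouse and a lakehouse).
QUERY = """
query RecentActivity($first: Int!) {
  orders(first: $first) { items { orderId total } }
  customers(first: $first) { items { customerId name } }
}
"""


def build_graphql_body(query: str, variables: dict) -> str:
    """Serialize a query and its variables into a GraphQL JSON request body."""
    return json.dumps({"query": query.strip(), "variables": variables})


body = build_graphql_body(QUERY, {"first": 5})
print(body)
```

An application would POST this body, with an appropriate access token, to the endpoint shown for the GraphQL item in its Fabric workspace.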

Cross-Platform and Cross-Tenant Collaboration

Fabric is designed to integrate deeply with the broader external data ecosystem and across organizational boundaries:

Databricks and Snowflake Partnerships: Fabric allows Azure Databricks Unity Catalog to query OneLake data directly without copying it. Similarly, bidirectional interoperability with Snowflake allows Fabric workloads to run directly on Snowflake-managed Iceberg tables via shortcuts.

External Data Sharing: Organizations can share data from their OneLake in-place with users in an entirely different Fabric tenant. This creates a read-only shortcut in the consumer's tenant, allowing secure cross-company collaboration without duplicating datasets.

Unified Security and Governance

Maintaining governance across multiple engines is a historical challenge that Fabric addresses through integrated security layers:

Microsoft Purview: Built directly into Fabric, Purview provides centralized data discovery, end-to-end lineage tracking, Data Loss Prevention (DLP), and sensitivity labels that automatically follow data as it moves or is exported.

OneSecurity (OneLake Security): Microsoft is actively unifying data access controls through OneLake Security. This data-plane role-based access control (RBAC) model defines security rules (such as folder, table, row, and column-level security) centrally in OneLake. Once defined, these rules are consistently enforced regardless of whether the user accesses the data via Spark, T-SQL, or Power BI.
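The "define once, enforce everywhere" model can be sketched as a single policy function that every engine must pass through. This is a conceptual toy, not the OneLake Security API: the `Role` class, the engine wrapper functions, and the sample data are all hypothetical.

```python
from dataclasses import dataclass
from typing import Callable

Row = dict[str, object]


@dataclass(frozen=True)
class Role:
    """Centrally defined rule: which columns and which rows a role may see."""
    name: str
    allowed_columns: frozenset[str]
    row_filter: Callable[[Row], bool]


def secure_read(rows: list[Row], role: Role) -> list[Row]:
    """Apply row-level, then column-level security at the storage layer."""
    return [
        {col: val for col, val in row.items() if col in role.allowed_columns}
        for row in rows
        if role.row_filter(row)
    ]


# Each "engine" delegates to the same storage-level check, so none can bypass it.
def spark_read(rows: list[Row], role: Role) -> list[Row]:
    return secure_read(rows, role)


def tsql_read(rows: list[Row], role: Role) -> list[Row]:
    return secure_read(rows, role)


ORDERS = [
    {"region": "EU", "customer": "a", "total": 10},
    {"region": "US", "customer": "b", "total": 20},
]
eu_analyst = Role(
    name="eu_analyst",
    allowed_columns=frozenset({"region", "total"}),
    row_filter=lambda row: row["region"] == "EU",
)

spark_result = spark_read(ORDERS, eu_analyst)
tsql_result = tsql_read(ORDERS, eu_analyst)
```

Both engines return the same filtered view because the rule lives with the data rather than in each engine's own permission system.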

Gladstone Benjamin
